Scraping Robot Solution - AWS Architecture Components

A spider is responsible for scraping the required contents of a targeted website. Refer to "A reliable web scraping robot – design Insights" for more details on spider development. Designing a configurable spider with the following options will reduce unnecessary network blockages and improve overall spider reliability.

Concurrency: How many spiders can concurrently scrape the target.
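A concurrency option of this kind can be sketched with a semaphore that caps how many spiders hit the target at once. This is a minimal illustration, not the article's implementation: `SpiderConfig`, `run_spiders`, and `fake_fetch` are hypothetical names, and the fetch function is a stand-in for a real HTTP request.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class SpiderConfig:
    """Hypothetical spider options; only `concurrency` comes from the text."""
    concurrency: int = 2  # how many spiders may scrape the target at once


async def run_spiders(urls, fetch, config):
    """Call `fetch(url)` for every URL, with at most `config.concurrency` in flight."""
    limiter = asyncio.Semaphore(config.concurrency)

    async def guarded(url):
        # Each spider waits here until a slot is free.
        async with limiter:
            return await fetch(url)

    # gather() returns results in the same order as the input URLs.
    return await asyncio.gather(*(guarded(url) for url in urls))


async def fake_fetch(url):
    """Stand-in for a real HTTP request (assumption: no network access here)."""
    await asyncio.sleep(0.01)
    return f"<html>{url}</html>"


if __name__ == "__main__":
    pages = [f"https://example.com/page/{i}" for i in range(5)]
    html = asyncio.run(run_spiders(pages, fake_fetch, SpiderConfig(concurrency=2)))
    print(len(html), "pages fetched")
```

Lowering `concurrency` trades throughput for a smaller footprint on the target site, which is usually the safer default when blockers are a concern.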

Some of the open-source elements that can be used to build such a solution are RabbitMQ, BeautifulSoup, Scrapy, MongoDB, InfluxDB, PostgreSQL, Nagios, and Python-Django. The rest of the document discusses the architecture and essential components required to deploy a scraping solution using AWS cloud-native resources.

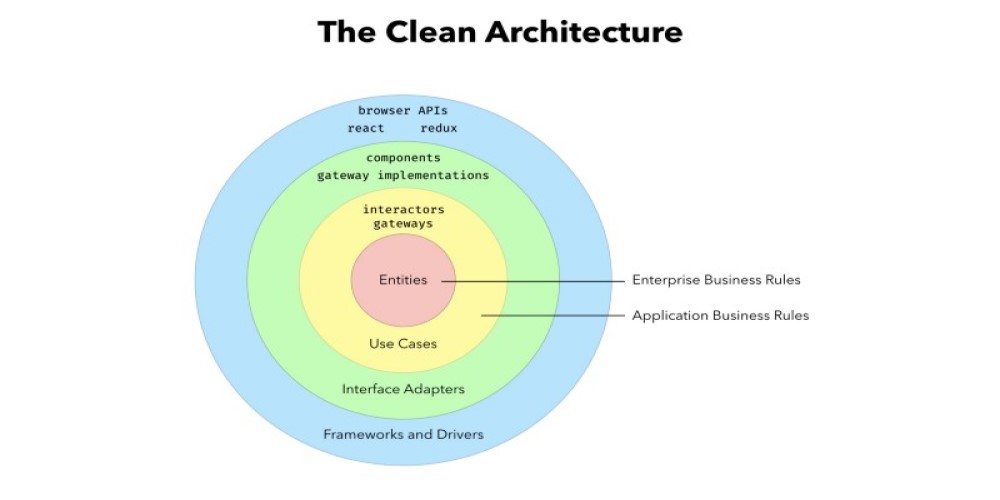

Developing a robust scraping solution requires handling many moving parts because of reliability issues intrinsic to the task: network traffic issues, frequent site content changes, and blockers. A reliable system should give good visibility into the solution's day-to-day operations, self-heal transient errors, and provide tools to handle permanent errors. The accompanying diagram outlines a minimally viable solution for building a production-grade scraping robot.

Scraping Robot Solution - Generic Architecture

The proposed architecture cleanly decouples activities using a message broker, which results in clean code and good operational visibility into the system. One can use open-source components to build the proposed solution.
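The split between transient and permanent errors can be sketched with a worker that consumes scrape tasks from a queue. This is a simplified illustration under stated assumptions: an in-memory `queue.Queue` stands in for the message broker, and `TransientError`, `PermanentError`, `process_queue`, and `max_attempts` are hypothetical names, not part of any library.

```python
import queue


class TransientError(Exception):
    """Hypothetical: a failure worth retrying (timeout, temporary block)."""


class PermanentError(Exception):
    """Hypothetical: a failure that needs human attention (site layout changed)."""


def process_queue(tasks, scrape, max_attempts=3):
    """Drain `tasks` of (url, attempt) pairs, retrying transient failures.

    Returns (results, dead_letters). URLs in dead_letters failed permanently
    or exhausted their retries and are left for an operator to inspect.
    """
    results, dead_letters = {}, []
    while True:
        try:
            url, attempt = tasks.get_nowait()
        except queue.Empty:
            break
        try:
            results[url] = scrape(url)
        except TransientError:
            if attempt < max_attempts:
                # Self-heal: put the task back on the queue for another try.
                tasks.put((url, attempt + 1))
            else:
                dead_letters.append(url)
        except PermanentError:
            # Do not retry; surface the task to operations tooling.
            dead_letters.append(url)
    return results, dead_letters
```

Because the producer only enqueues tasks and the worker only consumes them, either side can be replaced or scaled independently, and the queue depth and dead-letter list give a simple view of system health.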
